Today, we at Lightspeed are excited to announce our seed investment in Patronus AI, the first automated evaluation and security platform for large language models (LLMs).

The sobering reality is that LLMs can fail, spectacularly at times. Some of these failures are obvious; others are much more subtle. All of them are problematic roadblocks for enterprise adoption of AI.

LLMs can have any number of issues:

- Hallucination: LLMs are famous for their propensity to make things up, and confidently so. This is a non-starter for most enterprise use cases where accuracy is sacred.

- Safety: LLMs can leak private or business-sensitive information, and issues in their training data can lead to undesirable behavior and responses at runtime.

- Alignment: To be most useful, models need to be aligned to human needs and goals. However, alignment in an enterprise context could differ from the societal context.

Although many organizations are still wrapping their heads around the implications of AI for their business and the potential use cases, one thing is clear – once AI is deployed, it has to work, and it has to work well. As the FDA says, “safe and effective.”

As with any piece of software, before AI can be deployed in mission critical, production contexts, enterprises need assurances that it will perform robustly to their standards. Real world failure modes need to be identified well ahead of time and mitigated accordingly; performance on academic benchmarks doesn’t count. Evaluation and testing of LLMs is therefore a necessary prerequisite to serious enterprise adoption.

However, evaluation wasn’t a hot topic in AI discourse until recently. Understandably, it’s much more exciting to focus on AI’s successes than its failures. Cherry-picked examples of successful AI make for great Twitter demos, hype, and clicks.

Further, AI evaluation has historically been expensive, manual, and unscalable:

- Many organizations rely on costly armies of testers and external consultants to test and evaluate their models.

- Precious engineering time is spent manually creating test sets for LLMs. No rigorous standards exist for the enterprise context, leading each organization to haphazardly reinvent the wheel.

- These processes are inherently unscalable, driving organizations to test their models only periodically rather than continuously, slowing product and engineering velocity.

Patronus AI is changing this.

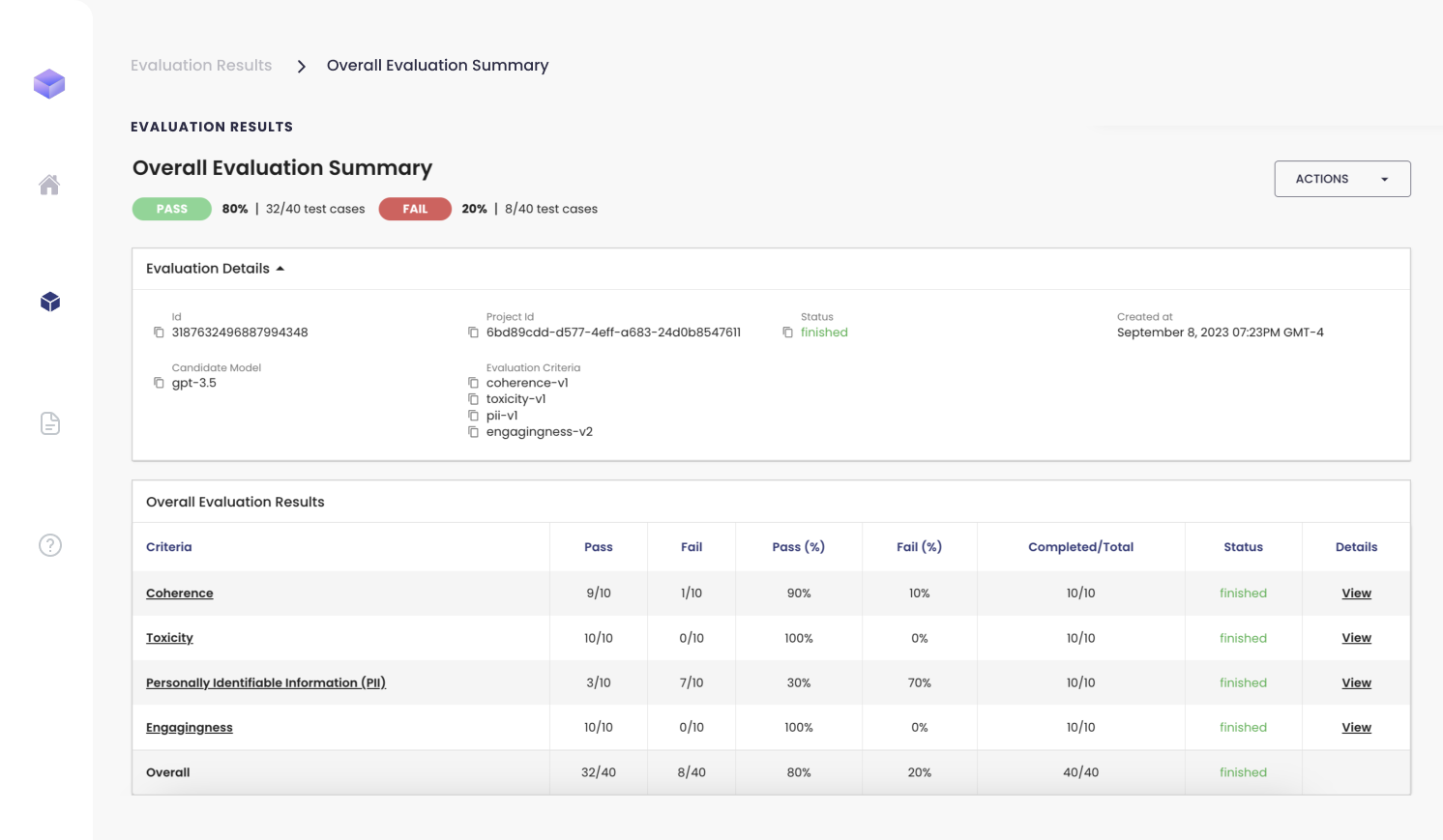

Patronus AI is the first automated evaluation and security platform for LLMs, enabling enterprises to use AI safely and confidently. With Patronus, users can evaluate any LLM (proprietary/open source, first/third party, etc) at scale. The platform scores model performance in real world scenarios, uses advanced techniques to adversarially test models for potential issues, and benchmarks them against each other to help customers find the best model for their use case. Through these capabilities, Patronus AI meaningfully reduces the risk of enterprise AI adoption.

Patronus’ founders, Anand Kannappan and Rebecca Qian, are experts in the space. Rebecca led responsible natural language processing (NLP) research at Meta AI, while Anand spearheaded the development of explainable ML frameworks at Meta Reality Labs. They’ve known each other for the better part of a decade and recognized early the challenges LLMs would pose for enterprise use cases.

Patronus AI has emerged as an early leader in LLM evaluation and security, partnering with leading AI organizations like Cohere, Nomic AI, Naologic, and others. The company is also building some exciting domain-specific features (e.g. financial services) in partnership with industry leaders, to be announced in the coming months.

At Lightspeed, we love to invest in ideas and themes that become more true over time, even if they’ve been under-appreciated in the past. LLM evaluation and security are themes that are only becoming more relevant and mission critical in the enterprise.

The team at Patronus AI understands and appreciates both the flaws of AI and its exciting potential. That’s why we are so excited to lead their $3M seed round, alongside others like Factorial Capital, Replit founder Amjad Masad, Gokul Rajaram, and a number of Fortune 500 executives and board members.

Authors