Today, we are excited to announce our investment in Langfuse and to join Marc, Max and Clemens on their journey. We are leading the company's $4 million seed round alongside our friends La Famiglia and Y Combinator.

Our investment in Langfuse is rooted in a deep thesis and the strong conviction that the analytics stack requires a complete overhaul to fully support generative models.

The crux lies in successfully integrating generative AI into production

The impact potential of generative models is by now clear to most. Studies suggest worker productivity can be boosted by as much as 40 percent, and these models will reshape from the ground up the way we consume, personalize, and interact digitally. Consequently, the world is racing to implement generative models into every application, ushering in a new era of cognition in software.

While the impact and importance are clear, the everyday life of developers building with generative AI is still very difficult. Traditional software development offered a linear experience: input X resulted in output Y, every time. The non-deterministic nature of generative models has fundamentally changed this, and simple debugging no longer works. Developers need a new stack of tooling to help them analyze, understand, and optimize model behavior. In conversations with countless founders building in the space, we have seen this challenge emerge as one of the largest hurdles to putting generative models into production.

A clear need for an independent third-party enablement layer

This is where Langfuse comes into play. Our thesis behind Langfuse is based on the belief that the new software stack for generative models will be shaped by independent third-party middleware that helps application developers bring the best experience to their end customers. Only with an independent third-party provider will application developers be able to get an unbiased, transparent view of model parameters and combine it with individualized data collected firsthand from customers. This is what Langfuse will enable every application developer to do.

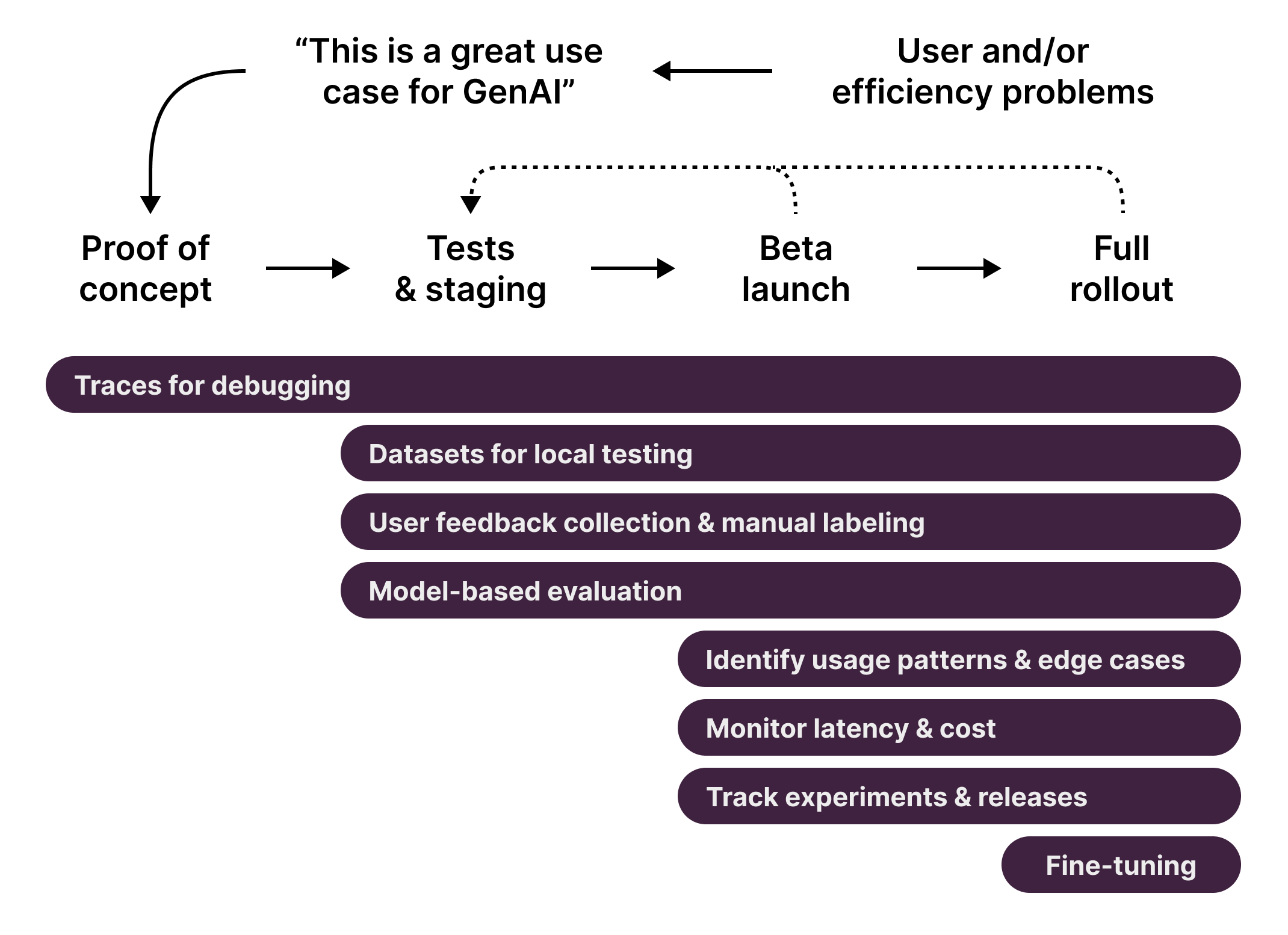

Langfuse is your companion for LLM application development from the ground up. From day one of the experimentation phase, Langfuse simplifies tracing and captures the full context of an LLM execution with its client SDKs. Based on a transparent understanding of model and application behaviors and weaknesses, teams can then make informed trade-offs in the triangle of cost, quality and latency to build the most tailored LLM experience into their product. In this way, Langfuse helps product and engineering teams embrace the iterative process of building LLM-based applications.
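To make the idea of "capturing the full context of an LLM execution" more concrete, here is a minimal, generic sketch of what a trace records: the inputs, the output, the latency and a rough size signal for each call. This is not Langfuse's SDK or API; the `traced` decorator, the `Span` record, the in-memory `TRACE` list, the whitespace-based token count and the `fake_llm` stand-in are all hypothetical, chosen only to illustrate the concept.

```python
import functools
import time
from dataclasses import dataclass, field
from typing import Any, Callable, List


@dataclass
class Span:
    """One recorded call (hypothetical structure, not Langfuse's data model)."""
    name: str
    inputs: dict
    output: Any = None
    latency_s: float = 0.0
    metadata: dict = field(default_factory=dict)


# Hypothetical in-memory trace store; a real tool would ship spans to a backend.
TRACE: List[Span] = []


def traced(name: str) -> Callable:
    """Decorator that records inputs, output and latency of each call."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            span = Span(name=name, inputs={"args": args, "kwargs": kwargs})
            start = time.perf_counter()
            try:
                span.output = fn(*args, **kwargs)
                # Crude size proxy (assumption): whitespace-separated words, not real tokens.
                span.metadata["output_words"] = len(str(span.output).split())
                return span.output
            finally:
                # Latency is recorded even if the call raises.
                span.latency_s = time.perf_counter() - start
                TRACE.append(span)
        return wrapper
    return decorator


@traced("fake_llm_call")
def fake_llm(prompt: str) -> str:
    # Deterministic stand-in for a model call; a real LLM's output would vary.
    return prompt.upper()


fake_llm("hello world")
for span in TRACE:
    print(span.name, span.output, span.metadata, f"{span.latency_s:.6f}s")
```

Because every call leaves a record of what went in, what came out and how long it took, a team can compare runs side by side when the same prompt yields different outputs, which is the kind of visibility that cost, quality and latency trade-offs depend on.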

Watch their demo video and try out a live demo of Langfuse here.

A team we have been waiting to work with

We have been following the team for quite some time. I first met Marc and Max five years ago in Munich's founder scene, specifically at the Center for Digital Technology Management, where many great German founders have their roots, such as fellow Lightspeed portfolio founder Hanno Renner. Marc is one of those rare founders who combines first-principles thinking with the ability to zoom in and out simultaneously. Max has the grit to constantly push for better solutions; this drive led him to rise quickly through the engineering ranks at Trade Republic. A self-taught coder, Max brings a bias toward action, consistently spinning up workshops to develop himself further. Once Clemens, who brings a clear lens for organization building, completed the team, and the three had iterated toward the core idea behind Langfuse during Y Combinator, we knew this was the right point in time to partner with them. All three are obsessed with their users and customers, as reflected in their level of engagement with their community, and this was a clear signal for us. So now: Let's build!

Authors