03/03/2026

AI

Adding a Security Layer to the AI Coding Stack

Introducing AURI: the security intelligence layer AI coding agents have been missing.

AI coding agents are no longer a novelty. They’re writing, reviewing, and shipping production code across every major enterprise. The tooling has matured fast. But the security layer around these agents hasn’t kept pace, and that gap is widening with every commit.

Companies want to move fast with AI coding agents and do so responsibly, with confidence that the generated code is secure.

That’s why we’re proud to partner with Varun Badhwar and the Endor Labs team on the launch of AURI, the security intelligence layer for AI coding agents and developers.

Giving AI coding agents security context

We’re at an inflection point where AI coding agents are moving from productivity tools to autonomous engineering teammates, and with that comes an entirely new security surface that the legacy application security industry was never built to address.

The content here should not be viewed as investment advice, nor does it constitute an offer to sell, or a solicitation of an offer to buy, any securities. The views expressed here are those of the individual Lightspeed Management Company, L.L.C. (“Lightspeed”) personnel and are not the views of Lightspeed or its affiliates; other market participants could take different views.

Unless otherwise indicated, the inclusion of any third-party firm and/or company names, brands and/or logos does not imply any affiliation with these firms or companies.

Enterprise security teams don’t need another scanner. They need a verifiable, reproducible, and independent security intelligence layer — three properties that matter more than ever in the agentic era.

Independent means the tool verifying your code’s security isn’t the same tool that wrote it. If a coding agent writes the code and then tells you it’s secure, you’re relying on the same model, the same context window, the same blind spots. Enterprise engineering and security have always demanded separation of concerns, where the developer who writes the code doesn’t sign off on the security review. The same principle applies to agentic coding.

Reproducible means the same code produces the same results every time. AI models are probabilistic by nature, meaning you can run the same prompt twice and get different answers. That’s acceptable (and even desired) for problem-solving during code design and generation. It’s not acceptable for security, where a missed vulnerability could mean a breach, and a false positive wastes hours of engineering time.

Verifiable means every finding comes with evidence. Not a probabilistic guess, but deterministic analysis grounded in reachability, data flows, and application architecture. Security teams need to trust that a clean scan means clean code, and that a flagged vulnerability is real.
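To make "reproducible" and "verifiable" concrete, here is a minimal sketch of a deterministic reachability check. It is an illustration only, not AURI's implementation: the call graph, the function names, and the `find_call_path` and `triage` helpers are all hypothetical. The idea is that a finding counts as reachable only if there is a call path from the application's entry point to the vulnerable function, and that path is returned as the evidence.

```python
from collections import deque

# Hypothetical call graph: each function maps to the functions it calls.
CALL_GRAPH = {
    "main": ["handle_request", "load_config"],
    "handle_request": ["parse_body", "render"],
    "parse_body": ["yaml_load"],
    "render": [],
    "load_config": [],
    "yaml_load": [],
    "legacy_export": ["pickle_loads"],  # never called from main
    "pickle_loads": [],
}


def find_call_path(graph, entry, target):
    """Return the shortest call path from entry to target, or None if unreachable."""
    queue = deque([[entry]])
    seen = {entry}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        # Sorting the callees keeps traversal order, and so the output, stable.
        for callee in sorted(graph.get(node, [])):
            if callee not in seen:
                seen.add(callee)
                queue.append(path + [callee])
    return None


def triage(graph, entry, vulnerable_functions):
    """Attach a reachability verdict and its evidence path to each finding."""
    findings = []
    for fn in sorted(vulnerable_functions):
        path = find_call_path(graph, entry, fn)
        findings.append(
            {"function": fn, "reachable": path is not None, "evidence": path}
        )
    return findings


findings = triage(CALL_GRAPH, "main", {"yaml_load", "pickle_loads"})
for finding in findings:
    print(finding)
```

Because the traversal is a plain breadth-first search over sorted inputs, running it twice on the same graph yields identical findings, and each reachable finding carries the call path a reviewer can check by hand. A production analysis would also need to handle dynamic dispatch, data flow, and transitive dependencies, which this sketch ignores.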

How AURI helps

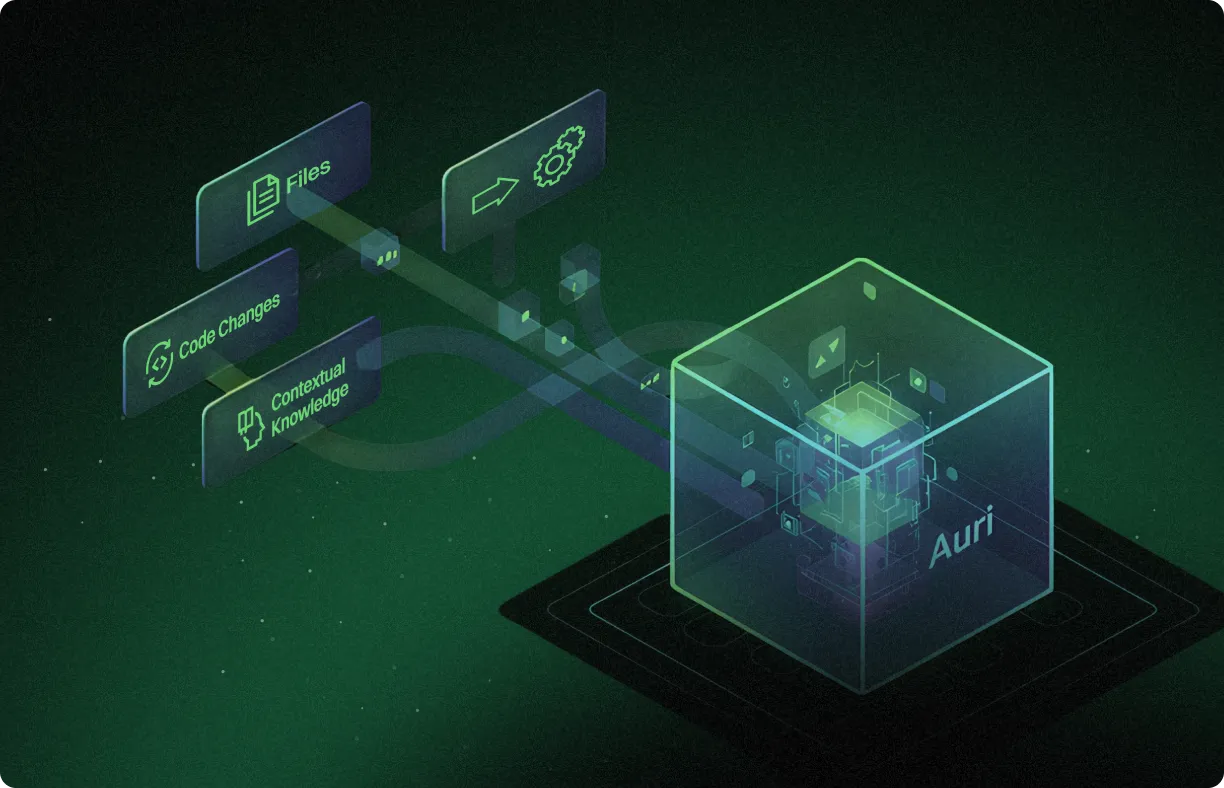

AURI by Endor Labs provides the missing security intelligence layer for agentic software development. It works across every major AI coding agent and integrated development environment (IDE), bringing a deep understanding of each codebase, its dependency graph, and the broader threat landscape.

What sets AURI apart is its approach. Rather than relying on brute-force LLM scanning, AURI’s code context graph enables its agents to navigate codebases intelligently, understand semantic meaning, track data flow, and assess security posture. The results speak for themselves: up to 95% noise reduction across code, dependencies, and container images, and 83% fewer blocked pull requests from security alerts.

Why we’re backing Endor Labs

The combination of speed and safety is what lets the industry scale responsibly. Enterprises shouldn’t have to choose between shipping fast and shipping secure. With AURI, they don’t. This is why companies like Glean, Mysten Labs, Netskope, and Rubrik turn to Endor Labs to move fast while staying safe.

We’ve seen this pattern before. When a critical infrastructure category emerges, the companies that build purpose-built products for practitioners — not features bolted onto existing platforms — are the ones that define the category. Endor Labs is doing exactly that for application security in the agentic era, and it’s why we’re proud to back them.

Endor Labs has made AURI’s Skills plugin, MCP, and CLI free for all developers, putting security intelligence directly into the hands of the people who need it most.

Learn more about AURI or get started for free.

The content here does not constitute an offer to sell or a solicitation of an offer to buy any securities or investment advisory services.

The views expressed are those of the authors and do not necessarily represent the views or opinions of Lightspeed. Other market participants could take different views. Unless otherwise indicated, the inclusion of any third-party firm and/or company names, brands and/or logos is for representational purposes and does not imply any affiliation with these firms or companies and also does not imply their endorsement of the views expressed by the authors.

Certain information contained herein is based on information from various sources prepared by third parties. While such sources are believed by Lightspeed to be reliable, neither Lightspeed nor its affiliates assume any responsibility for the accuracy or completeness of such information, and such information has not been independently verified by Lightspeed. For more details please see https://www.lsvp.com/legal.
